The original interface was developed only for iOS devices, using MRMR widgets. The new version of the Moori interface is designed for mobile phones and will be available on any smartphone.

Chiptune music workshop, Worldtronic Berlin Dec 3rd 2011

Little Sound DJ is tracker-based music software for the Game Boy, developed by Johan Kotlinski. This tutorial is for beginner to intermediate users. You can find more information on Little Sound DJ at http://littlesounddj.com/lsd/

Sound Design 1 is offered at Parsons the New School for Design. It provides an overview of nonlinear audio production and sound culture with an emphasis on integration with other narrative formats. In this introductory-level course, students gain familiarity with the basic tools and techniques of nonlinear audio production. Projects improve listening skills, raise awareness of our aural experience and sonic environment, integrate sound with narrative visual media, and allow us to communicate and conceptualize with sound.

Check out our class blog: http://www.bubblyfish.com/SoundDesignFall2011

Official Moori site: http://www.bubblyfish.com/thesis

Moori allows audience members to participate actively in a performance. Using a smartphone's dynamic interface and SMS, audience members share their thoughts in response to questions posed by the performer. Input from text fields, buttons, and multi-touch pads is processed to generate algorithmic audio and visuals, and to manipulate the performer's work. The result is a collaboration between performer and audience: a real-time audio-visual composition and a dramatic narrative. Moori is an ongoing research and performance project, originally developed as an MFA Design & Technology thesis.

iOS device User Interface System Diagram

Excerpt from Moori

Crowd police

This project was created with openFrameworks and computer vision. A PS3 camera mounted on the ceiling captures movement in the room. The camera's field of view is mapped to the computer screen, and the detected movement becomes the force that changes a vector field. Meanwhile, the animated character moves along an invisible noise field; when it detects forces in the vector field nearby, it follows the vector field instead. The character is built with a particle-and-spring system. The vector field is drawn as blue lines, and the moving blobs are the people moving in the room.
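A minimal Python sketch of the steering rule described above (the piece itself was built in openFrameworks): the screen is divided into a grid of force vectors, camera motion adds force to cells, and the character follows the force in its own cell when that force is strong enough, otherwise the noise field. The grid size, the `threshold`, the `fade` decay, and the sine-based `noise_direction` (a stand-in for Perlin noise) are all assumptions, and the particle-and-spring body is left out.

```python
import math


class VectorField:
    """Grid of 2D force vectors covering a width x height screen."""

    def __init__(self, width, height, cell=20):
        self.cell = cell
        self.cols = max(1, width // cell)
        self.rows = max(1, height // cell)
        self.forces = [[(0.0, 0.0)] * self.cols for _ in range(self.rows)]

    def _index(self, x, y):
        # Clamp screen coordinates to a valid grid cell.
        col = min(self.cols - 1, max(0, int(x // self.cell)))
        row = min(self.rows - 1, max(0, int(y // self.cell)))
        return row, col

    def add_force(self, x, y, fx, fy):
        # Called wherever the camera detects movement (assumed input).
        row, col = self._index(x, y)
        ox, oy = self.forces[row][col]
        self.forces[row][col] = (ox + fx, oy + fy)

    def force_at(self, x, y):
        row, col = self._index(x, y)
        return self.forces[row][col]

    def fade(self, amount=0.95):
        # Let forces decay so the field relaxes when people stop moving.
        self.forces = [[(fx * amount, fy * amount) for fx, fy in row]
                       for row in self.forces]


def noise_direction(x, y, t):
    # Stand-in for Perlin noise: a smooth, time-varying angle built from sines.
    angle = math.pi * (math.sin(x * 0.01 + t) + math.cos(y * 0.013 - 0.7 * t))
    return math.cos(angle), math.sin(angle)


def steer(field, x, y, t, threshold=0.1):
    """Follow the vector field if its force here is strong enough, else the noise field."""
    fx, fy = field.force_at(x, y)
    if math.hypot(fx, fy) > threshold:
        return fx, fy
    return noise_direction(x, y, t)
```

Switching on a force threshold, rather than blending the two directions, mirrors the "follows the vector field instead" behavior in the description.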

PS3 camera testing

Wearable technology, Arduino, physical computing, ubiquitous computing.

The system integrates a wearable user interface for audience members at electronic music performances. It allows a performer to communicate with the audience through the wearable device by sending signals that prompt gestures from audience members. The audience's movement data is fed back to the main system and affects the audio-visual performance. With the system, audience members become active participants, and the concert experience as a whole can be more interactive and immersive. Moori creates a dialogue that connects performer and audience members, so that the performance itself becomes a conversation.

Design Question

Could a musical performance be enhanced by integrating other activities? People attend live performances in addition to listening to prerecorded music because going to a concert provides a unique experience beyond the musical content alone. The experience may come from the concert space they occupy, interactions between the crowd and the performer, or occurrences among audience groups. As I observe the crowd at a concert, I see people who are deeply absorbed, people who are simply spectators, and people who are not engaged in the performance at all. Would it be possible to introduce extra elements into a performance that might draw audience members in? I believe that giving audiences an objective and a role to play would captivate them and create a memorable experience.

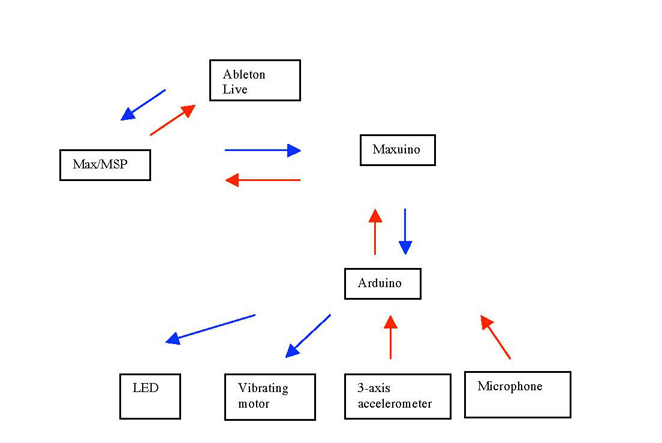

System Diagram

Can the communication between an artist and an audience be enhanced through an unorthodox method? Moori was conceived from this notion. Audience members are given an active role through a wearable interface and a playful act, and their effort is reflected in the performance through sound or visuals. The embodied experience comes from the wearable interface distributed to each participant. Moori is specifically designed for electronic music in an audio-visual performance setting for approximately 50 people.

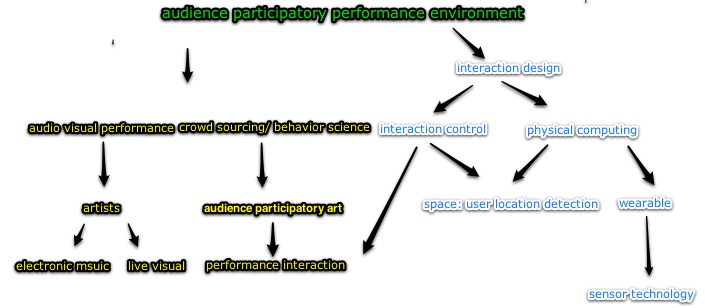

Domain Map

Wearable and music – two-way communication

The two-way audio communication system is built in Max/MSP. The music, composed in Ableton Live in both MIDI and audio formats, sends MIDI signals to Max/MSP. The signals are then passed to Maxuino, a Max/MSP application that allows Max to communicate with other hardware via a serial port, which sends OSC messages to a LilyPad Arduino to control the LED and vibrating motor. In the other direction, a 3-axis accelerometer records the movement of the user's arm and returns the movement data to Max/MSP via Maxuino. Max/MSP then converts the movement data into MIDI control data and sends the values to Ableton Live to control audio effects and parameters.
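The two directions of the loop are mostly range conversions. The Python sketch below shows that arithmetic under stated assumptions: the accelerometer arrives as raw 10-bit Arduino readings calibrated to a hypothetical 300 to 700 range, movement becomes a standard 3-byte MIDI Control Change message, and incoming note-ons become a PWM level (0 to 255) for the LED or motor. The low/high note split between motor and LED is a hypothetical mapping, not the one used in Moori.

```python
def accel_to_cc(raw, raw_min=300, raw_max=700):
    """Scale a raw accelerometer reading to a 0-127 MIDI CC value, clamping outliers."""
    clamped = max(raw_min, min(raw_max, raw))
    return round((clamped - raw_min) * 127 / (raw_max - raw_min))


def cc_message(channel, controller, value):
    """Build a 3-byte MIDI Control Change message (status 0xB0 plus channel 0-15)."""
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])


def note_to_actuators(status, note, velocity, split=60):
    """Turn an incoming MIDI note into (actuator, PWM level 0-255).

    Hypothetical mapping: notes below `split` drive the vibrating motor,
    the rest drive the LED. Note-off (or note-on with velocity 0) gives level 0.
    """
    is_note_on = (status & 0xF0) == 0x90 and velocity > 0
    level = min(255, velocity * 2) if is_note_on else 0
    return ("motor" if note < split else "led", level)
```

In the piece itself this scaling happens inside Max/MSP (for example with its `scale` object); the sketch only makes the value ranges explicit.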

Category: physical computing.

In summer 2010, I interned at MakerBot Industries, a DIY 3D printer maker. There, I researched creating music on a 3D printer using a Python script. The printer has three motors that move along the x, y, and z axes. The printer is driven by G-code, a plain-text machine-control language in which each line is a command, such as a move to a coordinate at a given speed. Each composition is written in a MIDI sequencing program, then converted to G-code with mid2cnc.py, a Python script written by TeamTeamUSA. This is an original song I composed for a MakerBot, titled "Lullaby for MakerBot."

Lullaby for a Makerbot from haeyoung kim on Vimeo.
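The general idea behind MIDI-to-G-code conversion is that a stepper motor sounds at its step rate, so each note becomes a move whose feed rate makes the motor step at the note's frequency for the note's duration. The Python sketch below illustrates that idea on a single axis; it is not mid2cnc.py's code, and `steps_per_mm=80.0` is a hypothetical machine value (real printers differ, and firmware acceleration will bend the pitch).

```python
def midi_to_hz(note):
    """Equal-tempered frequency of a MIDI note number (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def melody_to_gcode(notes, steps_per_mm=80.0):
    """Turn (midi_note, seconds) pairs into single-axis G-code moves."""
    lines = ["G21", "G91"]  # millimetre units, relative positioning
    direction = 1
    for note, seconds in notes:
        hz = midi_to_hz(note)                   # desired step pulses per second
        feed = hz / steps_per_mm * 60           # feed rate in mm per minute
        distance = hz * seconds / steps_per_mm  # mm travelled while the note sounds
        lines.append(f"G1 X{direction * distance:.3f} F{feed:.1f}")
        direction = -direction  # alternate direction so the axis stays in range
    return lines
```

For example, A4 held for one second becomes `G1 X5.500 F330.0` with these settings.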

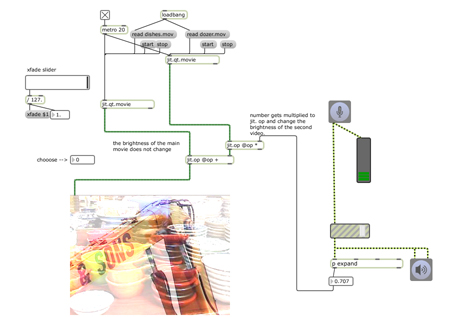

Video manipulation using the call-and-response technique

The idea is based on the call-and-response technique used in live concerts. The system uses microphone input to control video playback. Microphones are embedded in the wearable and also set up on the stage. When the input level exceeds a designated threshold, it triggers the video; when the input level drops, the video fades out. As the participants learn the interaction, the performer integrates the audience's shouting into the performance and continues to choreograph the shout-along. This code is based on Robert Ramirez's Max/MSP example.
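The threshold-and-fade behavior can be sketched in a few lines of Python. This is not the Max/MSP patch; it assumes microphone audio arrives in blocks of floats between -1.0 and 1.0, measures each block's RMS level, snaps the video to full opacity on a shout, and multiplies the opacity by a fade factor on each quiet block. The `threshold` and `fade` values are hypothetical and would need tuning per microphone and room.

```python
import math


def rms(samples):
    """Root-mean-square level of one block of microphone samples (-1.0..1.0)."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


class ShoutTrigger:
    """Show the video at full opacity on a shout, then fade it out as the room quiets."""

    def __init__(self, threshold=0.3, fade=0.9):
        self.threshold = threshold  # hypothetical level; tune per microphone and room
        self.fade = fade            # opacity multiplier applied per quiet block
        self.opacity = 0.0

    def update(self, samples):
        if rms(samples) > self.threshold:
            self.opacity = 1.0
        else:
            self.opacity *= self.fade
        return self.opacity
```

An exponential fade avoids a hard cut when the shouting stops, which suits the "fades out" behavior described above.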

In much audio-visual work, artists explore ways to connect sound and visuals. What about letters and words? What kind of sound can represent the meaning of a word? This was a more conceptual approach to exploring a new relationship between words and sound. This additive synth is driven by keyboard input: each letter is mapped to a specific frequency, and as letters are typed together, their tones combine into an additive synth. Input is split at the ‘.’ and ‘return’ keys; when either key is entered, the sound is muted and the previously entered text is cleared. This was my first exploration of narrative and audio-visual work, and it led me to create my thesis project, Moori.

Text input particles from haeyoung kim on Vimeo.
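A minimal Python sketch of the letter-to-frequency additive synth described above. The mapping is a hypothetical one ('a' = 220 Hz, each later letter one semitone higher), each letter contributes one sine partial, and the mix is scaled by the number of letters so it stays within -1..1. A '.' or Return clears the text, which also silences the output.

```python
import math

BASE_HZ = 220.0  # hypothetical mapping: 'a' = A3, each later letter one semitone higher


def letter_to_hz(ch):
    return BASE_HZ * 2 ** ((ord(ch.lower()) - ord("a")) / 12)


class TextSynth:
    """Each typed letter adds a sine partial; '.' or Return mutes and clears the text."""

    def __init__(self, sample_rate=44100):
        self.sample_rate = sample_rate
        self.text = ""

    def key(self, ch):
        if ch in (".", "\n", "\r"):
            self.text = ""  # end of phrase: silence and clear
        elif ch.isascii() and ch.isalpha():
            self.text += ch  # other keys (spaces, digits) are ignored

    def render(self, n):
        """Return n samples of the additive mix of all current letters."""
        freqs = [letter_to_hz(c) for c in self.text]
        if not freqs:
            return [0.0] * n
        gain = 1.0 / len(freqs)  # keep the summed signal within -1..1
        return [gain * sum(math.sin(2 * math.pi * f * i / self.sample_rate) for f in freqs)
                for i in range(n)]
```

Repeated letters simply add the same partial twice, so a word's spelling, not just its letter set, shapes the timbre.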

Particles interact with the live camera image. When I step into the camera's field of view and push the particles around, they bounce off me. Sound input resets the particles.

Camera particle interaction from haeyoung kim on Vimeo.
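One way to get this behavior, sketched in Python under assumptions: the camera image has already been reduced to a boolean foreground mask (True where a person is), a particle reverses its velocity on any axis whose next step would land on the mask or leave the screen, and a sound level above a hypothetical threshold scatters all particles to random positions. The mask, the `threshold`, and the reset-to-random-position rule are illustrative choices, not the original implementation.

```python
import random


class Particle:
    def __init__(self, x, y, vx, vy):
        self.x, self.y, self.vx, self.vy = x, y, vx, vy


def occupied(mask, x, y):
    """True if (x, y) lands on a foreground ("person") pixel of the camera mask."""
    row, col = int(y), int(x)
    return 0 <= row < len(mask) and 0 <= col < len(mask[0]) and mask[row][col]


def step(particles, mask):
    """Move each particle one frame, reversing velocity at the body or the screen edge."""
    h, w = len(mask), len(mask[0])
    for p in particles:
        nx, ny = p.x + p.vx, p.y + p.vy
        if not 0 <= nx < w or occupied(mask, nx, p.y):
            p.vx = -p.vx
        if not 0 <= ny < h or occupied(mask, p.x, ny):
            p.vy = -p.vy
        p.x += p.vx
        p.y += p.vy


def reset_if_loud(particles, level, width, height, threshold=0.5, rng=random):
    """Scatter all particles to random positions when the sound level passes a threshold."""
    if level > threshold:
        for p in particles:
            p.x, p.y = rng.random() * width, rng.random() * height
```

Testing each axis separately lets a particle slide along the edge of a silhouette instead of stopping dead.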

FLEUR (Flower – LED – Email – Uninotification – Receptacle) is an ambient, calm alternative to the discomfort of current email notification systems, using an interface that recalls nature. This project was conceived by Pritika, Aaron, Haeyoung, and Garrett.

A user can sort their Gmail messages into different categories, and each flower stem represents a specific category. When a new email arrives in one of those categories, a Python script detects the unread messages and sends a notification signal to the Arduino via a WiFi shield. The Arduino then turns on the LEDs on the corresponding flower, and once the user has checked the email, the LEDs turn off. Click here for the presentation.
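A sketch of what such a polling script could look like, using Python's standard `imaplib` against Gmail's IMAP server and a plain TCP socket to the Arduino. Everything specific here is an assumption: the label names in `LABEL_TO_FLOWER`, the Arduino's address and port 8888, and the one-line message format (one '1' or '0' per flower) are hypothetical, IMAP access must be enabled on the account, and the matching Arduino code is not shown.

```python
import imaplib
import socket

# Hypothetical Gmail labels, each mapped to one flower stem (index = flower number).
LABEL_TO_FLOWER = {"Family": 0, "Work": 1, "School": 2}


def unread_count(imap, label):
    """Number of unseen messages under one Gmail label."""
    status, _ = imap.select(f'"{label}"', readonly=True)  # readonly: don't mark as read
    if status != "OK":
        return 0
    status, data = imap.search(None, "UNSEEN")
    return len(data[0].split()) if status == "OK" else 0


def led_message(counts):
    """One character per flower: '1' lights its LEDs, '0' turns them off."""
    states = ["0"] * len(LABEL_TO_FLOWER)
    for label, flower in LABEL_TO_FLOWER.items():
        if counts.get(label, 0) > 0:
            states[flower] = "1"
    return "".join(states) + "\n"


def send_to_arduino(message, host, port=8888):
    # Assumes the WiFi-shield sketch listens for a TCP connection on this port.
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(message.encode("ascii"))


def poll_once(user, password, arduino_host):
    imap = imaplib.IMAP4_SSL("imap.gmail.com")
    try:
        imap.login(user, password)
        counts = {label: unread_count(imap, label) for label in LABEL_TO_FLOWER}
    finally:
        imap.logout()
    send_to_arduino(led_message(counts), arduino_host)
```

Because the full state is resent on every poll, a flower goes dark on the next poll after its messages are read, with no separate "read" event needed.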

What if a machine or a robot had emotions that were not manipulated or trained by a human? Fugu is a conceptual shy robotic blowfish that floats in her habitat. She is shy, easily scared by loud, sudden noises and movements, and prefers to float close to the ceiling, away from ground noise. When scared, she inflates and drifts away from the source of the disturbance. She is curious about human beings but usually too scared to approach them. She comes close when people are quiet and appear stationary, descending to eye level in the room. She has a chirpy personality when she is comfortable in her habitat and likes to make chirping noises. This was a collaborative project with Pritika, Annmarie, and Garrett.

To see the presentation, click here.
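Fugu's temperament reads naturally as a small state machine. The Python sketch below is one hypothetical encoding of it, assuming sound and motion arrive as normalized levels (0 to 1) from sensors: a loud noise or sudden movement scares her, a sustained stretch of quiet stillness makes her curious enough to descend, and anything in between keeps her floating near the ceiling. All thresholds and the 10-second calm period are illustrative values, not the project's.

```python
from enum import Enum


class Mood(Enum):
    FLOATING = "floating near the ceiling"
    SCARED = "inflated, drifting away"
    CURIOUS = "descending to eye level"


class Fugu:
    def __init__(self, loud=0.7, busy=0.5, hush=0.2, still=0.1, calm_after=10.0):
        self.loud, self.busy = loud, busy      # hypothetical "scary" sound/motion levels
        self.hush, self.still = hush, still    # hypothetical "quiet and stationary" levels
        self.calm_after = calm_after           # seconds of calm before approaching people
        self.quiet_time = 0.0
        self.mood = Mood.FLOATING

    def update(self, sound, motion, dt):
        if sound > self.loud or motion > self.busy:
            self.quiet_time = 0.0
            self.mood = Mood.SCARED            # inflate and drift away from the disturbance
        elif sound < self.hush and motion < self.still:
            self.quiet_time += dt
            if self.quiet_time >= self.calm_after:
                self.mood = Mood.CURIOUS       # people are quiet and still: come closer
            else:
                self.mood = Mood.FLOATING
        else:
            self.quiet_time = 0.0
            self.mood = Mood.FLOATING          # some activity: stay up near the ceiling
        return self.mood
```

Resetting the calm timer on any activity makes her slow to trust and quick to retreat, which matches the shy character described above.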

The particles follow the direction and position of a vector field created by dragging the mouse.

vector field 2 from haeyoung kim on Vimeo.
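A Python sketch of the two steps involved, under assumptions: each mouse-drag event pushes the grid vectors near the cursor in the direction the mouse moved, with a linear falloff over a hypothetical `radius`, and each particle adds the vector in its current cell to its velocity, with damping so it doesn't accelerate forever. The grid layout (a list of rows of `(fx, fy)` tuples), `strength`, and `damping` are illustrative choices.

```python
import math


def add_drag(field, cell, x0, y0, x1, y1, radius=40.0, strength=0.1):
    """Push the grid vectors near (x1, y1) in the direction the mouse moved."""
    dx, dy = x1 - x0, y1 - y0
    for r, row in enumerate(field):
        for c, (fx, fy) in enumerate(row):
            cx, cy = (c + 0.5) * cell, (r + 0.5) * cell  # cell centre in pixels
            dist = math.hypot(cx - x1, cy - y1)
            if dist < radius:
                falloff = 1.0 - dist / radius  # strongest directly under the cursor
                row[c] = (fx + dx * strength * falloff, fy + dy * strength * falloff)


def move_particle(field, cell, p, damping=0.9):
    """p is [x, y, vx, vy]; the field vector under the particle accelerates it."""
    r = min(len(field) - 1, max(0, int(p[1] // cell)))
    c = min(len(field[0]) - 1, max(0, int(p[0] // cell)))
    fx, fy = field[r][c]
    p[2] = (p[2] + fx) * damping
    p[3] = (p[3] + fy) * damping
    p[0] += p[2]
    p[1] += p[3]
```

Because particles read the field at their own position, they trace the paths of past drags rather than chasing the cursor directly.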

The size of each line changes according to the strength of the force in the vector field.

vector field from haeyoung kim on Vimeo.

When you click and drag the mouse, small red spheres fall and leave trails. When they hit the boundaries of the window, they bounce off.

sphere trails from haeyoung kim on Vimeo.
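A minimal Python sketch of one sphere's motion: gravity accelerates it, it reflects off the window edges (and is pushed back inside so it can't tunnel out), and a fixed-length `deque` holds its recent positions for drawing the trail. Spawning spheres on mouse drag and the actual drawing are left to the graphics framework; the gravity value and trail length are assumptions.

```python
from collections import deque


class Sphere:
    """A falling sphere that keeps its last few positions as a trail."""

    def __init__(self, x, y, vx=0.0, vy=0.0, radius=5.0, trail_len=20):
        self.x, self.y, self.vx, self.vy, self.radius = x, y, vx, vy, radius
        self.trail = deque(maxlen=trail_len)  # oldest points drop off automatically

    def update(self, width, height, gravity=0.5):
        self.vy += gravity
        self.x += self.vx
        self.y += self.vy
        # Reflect off the window edges, clamping the sphere back inside the bounds.
        if self.x - self.radius < 0 or self.x + self.radius > width:
            self.vx = -self.vx
            self.x = min(max(self.x, self.radius), width - self.radius)
        if self.y - self.radius < 0 or self.y + self.radius > height:
            self.vy = -self.vy
            self.y = min(max(self.y, self.radius), height - self.radius)
        self.trail.append((self.x, self.y))
```

Drawing the trail points with decreasing opacity from newest to oldest gives the fading-streak look.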

Fireworks created in openFrameworks. The image is regenerated every 5 seconds. A nice screen saver for your mom.

Fireworks from haeyoung kim on Vimeo.
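A Python sketch of the regenerate-every-5-seconds loop: each burst launches particles at evenly spaced angles with randomized speeds, gravity pulls them down each frame, and an elapsed-time accumulator replaces the burst once `period` seconds have passed. Burst size, speed, gravity, and the upper-half-of-screen launch position are assumptions.

```python
import math
import random


def burst(cx, cy, count=100, speed=4.0, rng=random):
    """One firework: particles [x, y, vx, vy] leave the centre at evenly spaced angles."""
    particles = []
    for i in range(count):
        angle = 2 * math.pi * i / count
        v = speed * (0.5 + 0.5 * rng.random())  # vary speeds so the shell looks organic
        particles.append([cx, cy, v * math.cos(angle), v * math.sin(angle)])
    return particles


class Fireworks:
    def __init__(self, width, height, period=5.0, rng=None):
        self.width, self.height, self.period = width, height, period
        self.rng = rng or random.Random()
        self.elapsed = 0.0
        self.particles = self._new_burst()

    def _new_burst(self):
        # Launch somewhere in the upper half of the screen.
        return burst(self.rng.uniform(0, self.width),
                     self.rng.uniform(0, self.height * 0.5), rng=self.rng)

    def update(self, dt, gravity=0.05):
        self.elapsed += dt
        if self.elapsed >= self.period:  # regenerate every `period` seconds
            self.elapsed -= self.period
            self.particles = self._new_burst()
        for p in self.particles:
            p[3] += gravity
            p[0] += p[2]
            p[1] += p[3]
```

Subtracting `period` instead of resetting `elapsed` to zero keeps the 5-second rhythm steady even when frame times vary.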

This audio visualizer was generated in openFrameworks. The technique is inspired by John Whitney. The music is an excerpt from Morph 02, which I composed in 2004.

Morph02 from haeyoung kim on Vimeo.
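One common reading of John Whitney's "differential motion" is that point k of a set moves k times as fast as point 1, so the points drift in and out of harmonic alignment. The Python sketch below computes such a pattern; it is an illustration of that idea, not the visualizer's code, and the `amplitude` parameter is a hypothetical hook where an audio level could scale the figure.

```python
import math


def whitney_points(t, n=48, radius=200.0, base_speed=0.05, amplitude=1.0):
    """Positions of n points where point k rotates k times as fast as point 1.

    `amplitude` is a hypothetical hook for an audio level (e.g. RMS of the music).
    """
    points = []
    for k in range(1, n + 1):
        angle = 2 * math.pi * base_speed * k * t
        r = radius * k / n * amplitude  # spread points outward from the centre
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points
```

Since every speed is an integer multiple of `base_speed`, the whole pattern realigns every `1 / base_speed` time units (20 here), which gives the periodic "resolve" moments characteristic of Whitney's work.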